How do humans pass on complex concepts and knowledge to subsequent generations? In his research, UC Berkeley cognitive scientist Bill Thompson uses computational methods and large-scale experiments to understand problems like knowledge transmission, the universality of language categories, and the social aspects of human problem-solving.

Thompson is an Assistant Professor in the UC Berkeley Department of Psychology and Director of the Experimental Cognition Laboratory. He is also affiliated with the Institute for Cognitive and Brain Sciences and the Program in Cognitive Science.

In this visual interview, conducted by Matrix Postdoctoral Fellow Julia Sizek, we focus on one of Thompson’s most recent research projects, which examines how people become successful at a difficult problem-solving task by learning from one another. The findings were published in Science.

One of the big questions you grapple with is how people learn and what the relationship is between learned traits and “universal” human traits. What new tools and methods have emerged to conduct this research?

Psychological research can help us understand the basic cognitive processes involved in learning, memory, and reasoning, but traditional experiments were often limited to simple judgments about basic stimuli, made by small groups of participants. With modern computational methods, we can study these aspects of the mind with ever greater precision. My research tries to make use of these emerging tools and combine them with large-scale behavioral experiments to learn more about human language and cognition, especially our capacity to learn from and reason about each other.

For example, our recent studies have used machine learning methods such as neural networks to analyze large-scale behavioral datasets, uncovering patterns in the strategies people use to solve problems in groups. Using these methods, we’re able to study entire networks of participants, and leverage this larger-scale data to understand the underlying algorithmic structure of how people reason about more complex problems. That’s significant because it brings us much closer to the kinds of problems people face in the real world; moreover, understanding cognition in computational terms allows us to translate new findings into more human-like artificial intelligence systems.

In a study on cultural learning, you ask how people pass along strategies for performing tasks. In the experiment, participants tried a difficult sorting task. Can you describe the task and how the experiment works?

Suppose I lay out six images on the table in front of you. I tell you that each image has a different number from 1 to 6 on the back. Your task is to put the images in order, from 1 on the left to 6 on the right. If you get the order correct, I will pay you.

The trick is that you have to do this without ever seeing the numbers. All you can do is choose pairs of images to compare: if the pair you choose is out of order, I will swap their positions. However, every comparison you make reduces the eventual payout.

It might sound like a magic trick, but it is a genuinely difficult puzzle. Computer scientists have studied algorithms for solving this kind of problem extensively, and even the simpler ones can be quite counterintuitive.
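The rules of the puzzle can be made concrete with a short simulation. The Python sketch below is an illustration under our own assumptions, not the code used in the study: positions are indexed 0 to 5 from left to right, each call to the hypothetical `SortingTask.compare` method counts as one paid comparison, and the two strategies, `bubble_sort` and `insertion_sort`, are standard textbook algorithms chosen for illustration rather than the strategies participants actually reported.

```python
from typing import Callable, List


class SortingTask:
    """Hypothetical model of the six-image puzzle.

    The hidden numbers stay private; a player can only ask to compare two
    positions, and the pair is swapped if it is out of order.
    """

    def __init__(self, hidden: List[int]) -> None:
        self._hidden = list(hidden)  # hidden number at each table position
        self.comparisons = 0         # every comparison reduces the payout

    def compare(self, i: int, j: int) -> bool:
        """Compare positions i and j; swap them if out of order.

        Returns True if a swap happened, which is all the player observes.
        """
        left, right = min(i, j), max(i, j)
        self.comparisons += 1
        if self._hidden[left] > self._hidden[right]:
            self._hidden[left], self._hidden[right] = self._hidden[right], self._hidden[left]
            return True
        return False

    def is_sorted(self) -> bool:
        """Experimenter-side check that the images are in increasing order."""
        return all(a < b for a, b in zip(self._hidden, self._hidden[1:]))


def bubble_sort(task: SortingTask, n: int) -> None:
    # Sweep adjacent pairs left to right; stop early after a sweep with no swaps.
    for end in range(n - 1, 0, -1):
        swapped = False
        for i in range(end):
            if task.compare(i, i + 1):
                swapped = True
        if not swapped:
            break


def insertion_sort(task: SortingTask, n: int) -> None:
    # Grow a sorted prefix: move each new image left until a comparison causes no swap.
    for k in range(1, n):
        j = k
        while j > 0 and task.compare(j - 1, j):
            j -= 1


def cost(strategy: Callable[[SortingTask, int], None], hidden: List[int]) -> int:
    """Run a strategy on one hidden ordering and return the comparisons it used."""
    task = SortingTask(hidden)
    strategy(task, len(hidden))
    assert task.is_sorted(), "strategy failed to sort the images"
    return task.comparisons


if __name__ == "__main__":
    order = [4, 2, 6, 1, 5, 3]
    print(cost(bubble_sort, order), cost(insertion_sort, order))  # 14 11
```

On the ordering 4, 2, 6, 1, 5, 3, the bubble-sort strategy spends 14 comparisons and the insertion strategy spends 11; on a fully reversed ordering both need 15. Because every comparison lowers the payout, fewer comparisons means a better-paid solution, although which strategy wins can depend on the starting order.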

We studied this task because we are interested in how people discover solutions to difficult problems, a key ingredient of all human societies. We asked people to solve the puzzle without any training and to write down any insights they had into what makes a good or bad solution. Their messages were handed to the next group of participants, who also tried to solve the problem and wrote down their own insights.

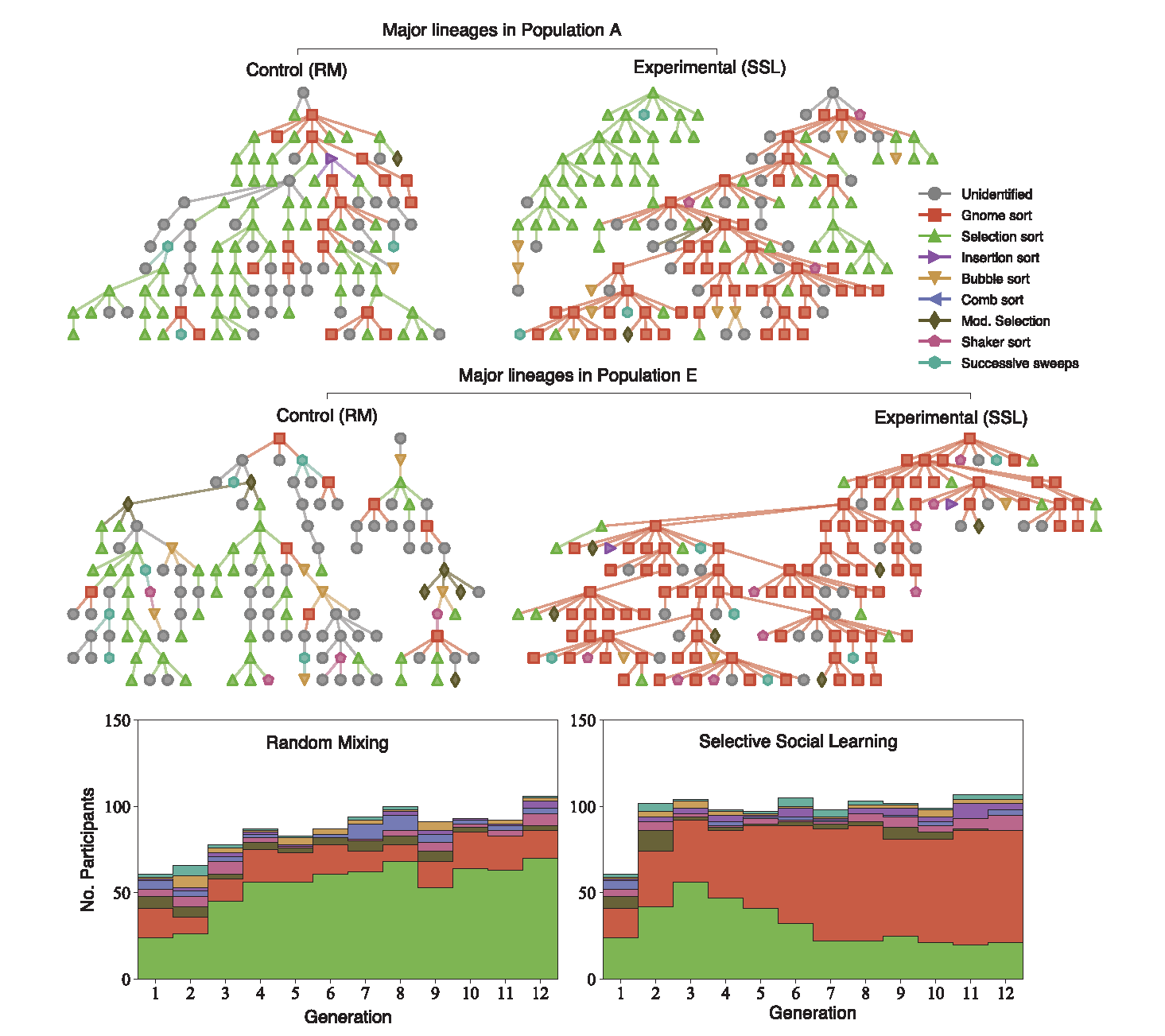

Over the course of the experiment, thousands of participants tried to solve the problem and passed information to each other about their successes and failures. Gradually, the strategies people discovered became more efficient, but also more complex. By the end of the experiment, people had discovered some surprising and highly efficient algorithms, including some that have been discovered and documented in computer science!

In this process, participants were able to learn from others and to choose whom to learn from. How did they decide which teachers to choose?

The key to this process of cumulative improvement, we found, was the ability to be selective about whose advice you seek out. When people were paired with teachers at random, high performers had little opportunity to pass on their knowledge. Rare innovative solutions often went extinct because people were never exposed to them.

In contrast, when people could choose a teacher based on the solutions that teacher had discovered, many people were exposed to innovative discoveries, even when those discoveries were rare.
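The difference between random pairing and choosing a teacher can be illustrated with a deliberately simplified toy model. In this Python sketch, which is our own illustration and not the study's procedure or data, each teacher is summarized by a single hypothetical number (the comparisons their solution used), and a learner either picks a teacher uniformly at random or picks the teacher with the fewest comparisons. `Teacher`, `choose_random`, `choose_selective`, and `exposure` are hypothetical names, and the costs are made-up values.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Teacher:
    name: str
    comparisons: int  # hypothetical cost of this teacher's solution (lower is better)


def choose_random(teachers: List[Teacher], rng: random.Random) -> Teacher:
    # Random pairing: each teacher is equally likely, regardless of performance.
    return rng.choice(teachers)


def choose_selective(teachers: List[Teacher], rng: random.Random) -> Teacher:
    # Selective choice: pick the teacher whose solution used the fewest comparisons.
    # (rng is unused here; it is accepted so both choosers share one signature.)
    return min(teachers, key=lambda t: t.comparisons)


def exposure(
    teachers: List[Teacher],
    n_learners: int,
    chooser: Callable[[List[Teacher], random.Random], Teacher],
    seed: int = 0,
) -> int:
    """Count how many learners end up learning from the single best teacher."""
    rng = random.Random(seed)
    best = min(teachers, key=lambda t: t.comparisons)
    return sum(chooser(teachers, rng) is best for _ in range(n_learners))


if __name__ == "__main__":
    # Hypothetical generation: nine teachers with a costly strategy, one rare innovator.
    teachers = [Teacher(f"t{i}", 14) for i in range(9)] + [Teacher("innovator", 10)]
    print("random:", exposure(teachers, 20, choose_random))
    print("selective:", exposure(teachers, 20, choose_selective))  # selective: 20
```

With one innovator among ten teachers, selective choice sends all 20 learners to the innovator, while random pairing sends each learner there only one time in ten on average, so a rare solution reaches few learners and is easily lost. The toy model assumes learners can see each teacher's performance exactly and ignores how hard a complex strategy is to learn.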

How did participants choose between strategies when they were able to pick their teachers?

One of the most interesting things the experiment illustrated was a kind of tradeoff in the accumulation of knowledge: as people’s strategies became more efficient over time, they also became harder for the next generation to learn. Participants in later generations inherited more complex algorithms, and as a result they often had a harder time acquiring that inherited knowledge. Put another way, as time goes on, we need to invest more and more in mechanisms that support learning and preserve knowledge.

Cognitive psychology has traditionally focused on fixed, universal cognitive functions, such as an innate ability to learn language or distinct types of memory. But there is now greater appreciation of the role that learning in culturally embedded social contexts plays in constructing our cognition, shaping how we conceptualize even basic aspects of the world. The culture we grow up in provides us with counting systems, maps, calendars, kinship categories, and many other cognitive tools for thought. We hope research like this can highlight some of the ways cognition is always evolving, and show how we can study aspects of that process experimentally and computationally.

How might your work help explain, more broadly, how strategies spread when they are more successful but harder to learn?

The tradeoff between how easily a strategy can be learned and how efficiently it solves a task shows why mechanisms that lower barriers to acquiring knowledge matter. One area where this is potentially quite important is the design of computational technologies that capture and transmit knowledge, such as social networking algorithms, large language models, or educational technologies.

In my view, understanding the tradeoffs that arise when thousands of people start to learn from each other via algorithms is a core challenge for contemporary psychological research, especially in the context of cognitive development among children and young adults. For example, using large language models to support education offers significant potential for personalization and wider access to knowledge, but these systems can also reinforce biases and reproduce harmful content present in their training data. More generally, as machine learning systems increasingly mediate human interaction, they may amplify misinformation, promote simplification over understanding, and distort our impression of what other people believe.

Here at UC Berkeley, one of my goals is to create the research infrastructure and training that new generations of students in behavioral science need in order to address these challenges with cutting-edge methods, including computational modeling, machine learning, and high-powered, large-scale experiments. Broadening access to these innovations is critical.